Is Looker Dead?

Looker and Tableau announced a partnership and you won't believe what happened next!

I had promised myself I would never write an article with the (clickbait?) motif 'X is dead, long live X'. But the temptation was killing me. It seems our industry really has a morbid fetish. Dashboards are dead. Is BI dead? Yes, BI is dead. But is Google BI dead? Well, Big Data is dead, Hadoop is dead, data lakes are dead (poor data fish), even data itself is dead! But yeah, long live data, dear peasants! I challenge you to find a data topic that is not dead if you search on Google. And if you do, don't forget the teachings of Keynes: "in the long run, we are all dead". The data topic du jour is only a data topic waiting to become dead under the keystrokes of a high-falutin' Substack writer.

So today we talk Looker.

What is going on here?

Like many of you, I followed last week's announcement of the new friendship between two giants in our space, Google's Looker and Salesforce's Tableau. And like many of you, I also wondered: "what is going on here?"

For those who didn't catch what was announced, here is a brief summary: Tableau users will be able to connect to Looker, using it as a "semantic layer" or a "data source" (whatever you want to call it).

Is this Looker getting back to its roots of "semantic layer" and acknowledging that it is better to focus on that than lose space to a new generation of dbt-powered metrics layers? Will Google send Looker's visualizations to its infamous graveyard and make it "headless" instead? Or is this just two competitors deciding to cooperate, so they can avoid "either-or" discussions?

Some people will say this is nothing more than connecting to a new data source, like Tableau connecting to Microsoft SSAS. And that the "metrics layer" is just another case of our industry's tendency to give a new name to old clothes: Millennials are reinventing MDX.

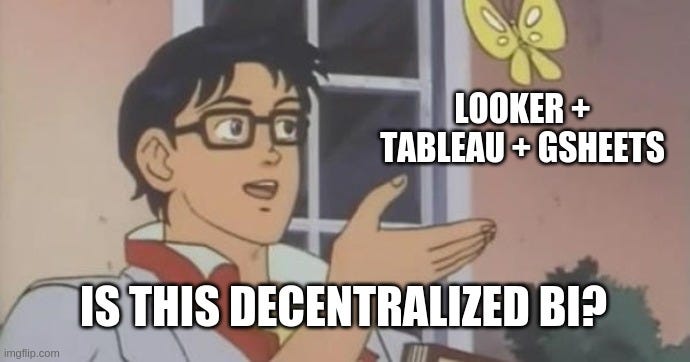

What I think is especially interesting is the confirmation of a trend to move the "metrics layer" further away from the presentation layer and promote a decentralized "BI" environment. I found the demo of Google Sheets using Looker behind the scenes, and then Tableau using the very same Looker model, quite interesting. Not in the sense of "I'm gonna buy a Tableau and a Looker license" interesting, but as a demonstration of how powerful centralized definitions combined with decentralized presentation layers can be.

It feels kinda natural to be able to seamlessly use the right tool for the right job without the burden of maintenance. The flexibility (and familiarity) of GSheets formulas when you need them, connected to your real, consistent, live, centralized data. The pixel-perfect visualizations of Tableau to tell a data story with the same data. And a world of opportunities for data apps that will come to fulfill our needs, be it a data alert app based on your KPIs, self-service analytics over a question-answering interface or root cause analysis based on your data.

Well, this all sounds great for Looker. Why all the FUD then, JP?

👀

So, it's Friday. Benn Stancil comes in with his hot take on the whole topic, well worth a read. Data Twitter is discussing the post. And suddenly dbt announces a new feature request created by its CPO: "dbt should know about metrics". And this Tim guy and I thought the exact same thing:

Maybe not by sheer coincidence, Tim himself had also written an interesting article in 2019 on how he moved many of the things he had in Looker to dbt and the benefits experienced. If you use Looker and dbt, you may hear a lot of echoes when reading his article.

"How to decide what to model in dbt vs. LookML?"

Tristan (CEO of dbt Labs) wrote an important article in early 2018 to answer a common question Looker users had when first coming into contact with dbt: "Ok, what do I do here and what do I do there?" What is the best practice and why? It can basically be summed up as: dimensional modelling in dbt, metrics modelling in LookML.

But with this move from dbt on Friday, it seems we may end up with metrics modelling in dbt as well. And there is quite some buzz around it already. Fivetran's CEO George Fraser is already a fan: "I would love for dbt to support metrics, mostly so that we could include metric definitions in Fivetran dbt packages." The same feeling is shared by Snowplow's CEO Alexander Dean. It feels right to have your metrics definitions close to where you define your dimensions, centralized and in a place not locked in to a specific vendor. The beauty of open standards is that they create this neutral ground where everyone can meet and feel free to build on top. dbt is becoming the Switzerland of our data stack.

Ok, so what about Looker?

According to Benn, Google's announcement last week was Looker = LookML. But here is the elephant in the room. If it is not about dimensional modelling anymore and, judging from the amount of excitement around dbt metrics, we may prefer to do metrics modelling in dbt as well, then what's left? Is it about the "API" part? But then why not use a SQL (or SQL-like) interface that implements the metrics, like Metriql (or Transform) does? Or maybe a dbt-centric BI will just import the metrics definitions from dbt and use its own way of querying, fulfilling a kind of "contract" with dbt: on one side, dbt defines the metrics and expectations; on the other side, the BI uses the definitions for querying, while guaranteeing correctness according to the expectations. Will BI vendors join in? I know we at Veezoo are happy to join the party, and Lightdash (the folks behind dbt2looker) already started with metrics in dbt before it was cool.

All in all, it feels to me that Looker is slowly being squeezed, becoming more and more like a thin layer. And the thinner it gets, the less lock-in there is and the more competition it will face. Yes, it is possible that it becomes exactly that, building on top of dbt and becoming an open-source framework, as Benn put it. Or maybe it will offer a great integration with the rest of the Google data stack. Still, if that's Looker in the end, then it feels like just a shadow of what it used to be.

Interlude: How far do we go with dbt?

So, is it dbt all the way? Is dbt really the right place for a metrics layer? Is there a difference between a metrics layer and a semantic layer?[1] And how far do we go with pushing things down to dbt?

It feels right to have metrics in dbt. Having them in dbt packages also seems neat. And we should probably not be talking about dbt as a metrics layer per se as defined by Benn, but more as a metrics repository, since it doesn't look like we will get an API to fetch the metrics results or the metrics SQL query. If dbt wants to be the best place to define metrics, then I wonder how far it will need to go down the semantic modelling rabbit hole. Metrics can be defined without any care for semantics, sure. But if we have one model with order ids and we want to talk about the number of orders (or whatever other metric), we will have to count them. If a different model has a pre-aggregated number_of_orders for each month, it should still be the same metric, even though here you will need to sum them up[2]. And maybe you have orders in other models as well: is the metric defined for each model, does it depend on a specific column name, or do you provide a mapping each time? Is there a need to define higher-level types so we can get better metrics definitions (maybe even a metrics package), or are these superfluous for our pragmatic needs of a non-semantic metrics layer? How deep would this rabbit hole go to make dbt more semantic? And while we are at it, will we start experimenting with pushing more things down to dbt? Is there space for a standard reporting definition layer, maybe abusing dbt's exposures? How far do we go with dbt? Where do we draw its boundaries?
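To make the "same metric, different aggregation" point concrete, here is a minimal Python sketch. The two models, their column names and the helper functions are all hypothetical and made up for illustration; this is not how dbt defines or computes metrics, just the arithmetic behind the question.

```python
# Hypothetical row-level model: one row per order (order_id assumed unique).
orders = [
    {"order_id": 1, "month": "2021-09"},
    {"order_id": 2, "month": "2021-09"},
    {"order_id": 3, "month": "2021-10"},
]

# Hypothetical pre-aggregated model: one row per month.
monthly_orders = [
    {"month": "2021-09", "number_of_orders": 2},
    {"month": "2021-10", "number_of_orders": 1},
]


def number_of_orders_from_rows(rows):
    """Row-level model: the metric is a count of rows."""
    return len(rows)


def number_of_orders_from_rollup(rows):
    """Pre-aggregated model: the same metric is a sum of partial counts."""
    return sum(row["number_of_orders"] for row in rows)


total_from_rows = number_of_orders_from_rows(orders)
total_from_rollup = number_of_orders_from_rollup(monthly_orders)
print(total_from_rows, total_from_rollup)  # prints "3 3"
```

Both functions answer "how many orders?", but something has to know which aggregation belongs to which model. That knowledge is exactly the kind of semantics a metrics definition would need to carry.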

"The reports of my death are greatly exaggerated"

Maybe all of this is just me rambling high-falutin', speculative nonsense and Looker is not dead, just reinventing itself. Benn talked about an open-source Looker, and on Wednesday this week we got a new open-source data language from Looker: Malloy. It just added more questions to my head.

What is Looker doing here now? A side project? Why not double down on an open-source LookML? Why a GPL-2 license? Is this just a new language for a notebook environment? It seems to be about defining models, dimensions, metrics, visualizations and dashboards, but first and foremost about querying data. Is Looker really going to be split along the modelling vs. presentation layer axis, like Benn said? Or do they see themselves as the metrics/semantic + BI definition layer and let others do the presentation/interpretation of these dashboard files, etc.?

I guess this is where I finish my article, before a new announcement comes or I get a headache. And like any other "Is X dead?" article, I end with an underwhelming: dead or not, future Looker will certainly be... different.

[1] Maybe this deserves a separate article.

[2] Ironically, the example I originally gave (number of customers per day) was wrong, as Maxime pointed out in the comment section below (count distinct would be non-additive in that case). It just goes to show the importance of a metrics layer: not computing metrics by yourself. Thanks, Maxime!

> even though here you will need to sum them up

Careful! Once you materialize `COUNT(DISTINCT customer_id)`, it becomes non-additive!
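Maxime's warning can be checked with a few lines of Python. This is a minimal sketch on made-up data (the events and customer ids are hypothetical): once daily distinct counts are materialized, summing them counts a customer once for every day they show up.

```python
# Hypothetical events as (day, customer_id). Customer "a" is active on both days.
events = [
    ("2021-10-01", "a"),
    ("2021-10-01", "b"),
    ("2021-10-02", "a"),
    ("2021-10-02", "c"),
]

# Materialize the equivalent of COUNT(DISTINCT customer_id) per day.
customers_by_day = {}
for day, customer_id in events:
    customers_by_day.setdefault(day, set()).add(customer_id)
daily_distinct = {day: len(ids) for day, ids in customers_by_day.items()}

# Summing the materialized daily counts double-counts customer "a".
sum_of_daily = sum(daily_distinct.values())

# The true distinct count has to go back to the raw customer ids.
true_distinct = len({customer_id for _, customer_id in events})

print(sum_of_daily, true_distinct)  # prints "4 3"
```

A plain row count would have survived the roll-up; the distinct count does not, because the pre-aggregated table no longer knows which customers overlap between days.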